Quarterly full-scope access reviews look rigorous on paper, but they waste time and miss risk in practice. A smarter path is statistical sampling for access, where you stratify by risk, pull a defensible sample, and review deeply with context. You get equivalent assurance, less reviewer fatigue, and cleaner evidence for auditors.

I have not met a single team that loves their current review cycles. Everyone is drowning in spreadsheets and screenshots. Half the decisions are guesses because context is missing. Then leadership wonders why standing access hangs around for months. The fix is not more policy. The fix is putting sampling and evidence where the work already happens, then enforcing expiries by default.

Key Takeaways:

- Replace full-scope reviews with risk-stratified sampling to keep assurance high and work low

- Size your samples to 90 to 95 percent confidence with a clear, repeatable calculation

- Build a compact evidence pack so reviewers decide in under two minutes

- Tie revocations and fixes back to the ticket to make audits a byproduct, not a project

- Document your sampling method in plain English so auditors can retrace every step

- Run time-bound access and Auto Reclaim to shrink standing privilege between campaigns

Stop Full-Scope Reviews: Statistical Sampling for Access Beats Checkbox Audits

Full-scope access reviews look safe, yet they create noise, delay real remediations, and burn people out. Statistical sampling for access, done with risk stratification, gives the same audit confidence with a fraction of the effort. Auditors care about design and evidence, not volume for its own sake.

Most teams equate “more reviewed” with “more secure.” The reality is different. When every manager gets a list of 600 users, they rubber stamp to survive the week. Signal gets buried. Risk stays. With a risk-based sample, you inspect the right slices deeply, prove control effectiveness, and free time to fix what matters.

Why full-scope reviews waste effort

Reviewers spend minutes per user hunting context, then default to keep. Multiply that by thousands. The team loses days, and the true problems hide in the pile. I have seen managers approve access for people who left months ago because the list was too long to check carefully.

Auditors do not require you to click every name. They require reasonable assurance. That is a key distinction. Reasonable assurance can be proven with a documented sampling methodology, consistent execution, and strong evidence on each sampled item. You trade shallow coverage for deep, defensible checks.

What auditors actually need to see

Auditors want three things: a clear method, evidence it ran as designed, and proof you acted on exceptions. They do not need heroics. They need traceability. If your plan says “90 percent confidence with 5 percent margin of error on high-risk apps,” your campaign and evidence must mirror that.

That is why context matters. Last login, job title, group memberships, request history. When reviewers see those in one place, decisions speed up and accuracy goes up. Pair that with clear revocation workflows and your audit story writes itself.

Why Statistical Sampling Belongs Inside Jira Workflows

Statistical sampling works best when it lives inside the tools your teams already use. Jira manages intake and status. Slack moves decisions quickly. The identity provider executes the change. Keeping sampling in workflow closes the loop and preserves evidence at the source.

A separate governance portal splits the process. Requests start in Jira, approvals float in chat, changes happen in the IDP, and evidence lives in screenshots. That fragmentation is the real cause of slow access and messy audits. Put the review, decision, and change on the same ticket and the system reinforces itself.

Symptom vs root cause in access reviews

The symptom looks like “slow, painful certifications.” The root cause is split context. Managers do not have usage or group data when reviewing. IT does not see reviewer intent when provisioning. Auditors cannot tie evidence back to the request that started it.

When those pieces stay together, reviewer accuracy improves. Access that should end, ends. Access that should stay, stays. And the platform can prove it without a spreadsheet rebuild every quarter.

Sampling inside workflow, not after the fact

Sampling done as an offline project creates rework. You export users, chase decisions, then try to line up outcomes with changes after the window closes. That is backwards. Embed the sample in Jira issues, route to owners, then execute revocations through the IDP as reviewers click Revoke.

The benefit is compounding. Every decision leaves a trail in the ticket. Every change is authoritative because it flowed through the identity provider. Your next audit pulls from the same source of truth as your daily work.

The Cost Case for Statistical Sampling in Access Reviews

Sampling reduces cost because you review fewer items more effectively. Time per decision drops when context is one click away, and total decisions drop because you are not chasing low-risk noise. The net result is hours back, fewer licenses wasted, and faster cleanups.

Put numbers to it. If each manual decision costs three minutes and a manager gets 600 names, that is 30 hours for one person. A risk-stratified sample might cut that list to 90 names. With better context, decisions fall to 90 to 120 seconds. That is roughly two to three hours in total, and those hours fix real risk.
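The arithmetic is easy to rerun with your own figures. Here is a minimal sketch in Python using the numbers from the paragraph above. The function name is illustrative, not part of any tool.

```python
def review_hours(items: int, seconds_per_decision: float) -> float:
    """Total reviewer time in hours for a list of access decisions."""
    return items * seconds_per_decision / 3600


# Full-scope list: 600 names at three minutes each.
print(review_hours(600, 180))  # 30.0

# Risk-stratified sample: 90 names at 90 to 120 seconds each.
print(review_hours(90, 90))    # 2.25
print(review_hours(90, 120))   # 3.0
```

Swap in your own list sizes and timing to see where the break-even sits for your team.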

False positives and reviewer fatigue

Overlong lists push reviewers into autopilot. That inflates false approvals, where access that is no longer needed gets kept. Fewer, better-context decisions shrink that error rate. It is the same logic behind audit sampling standards like PCAOB AS 2315 and AICPA guidance on audit sampling. You can defend the plan and the result.

Reviewer fatigue is more than an annoyance. It is a control failure in slow motion. Sampling prevents fatigue by setting a clear, bounded task with reliable context and a tight evidence pack.

Budget and opportunity cost

All that manual review time is not free. It crowds out system hardening, onboarding improvements, and license optimization work. Meanwhile, unused licenses keep billing because no one had the time to run down inactivity.

Sampling flips the allocation. You review fewer items, so you can invest the saved time in remediations and policy cleanups. Pair that with inactivity-driven reclamation and you claw back real budget, month after month. Baseline expectations for access governance are set in frameworks like NIST SP 800-53 AC-2. Sampling helps you meet them without busywork.

What Reviewers Feel During Access Certifications

Reviewers are not lazy. They are overloaded. Giant lists, thin context, and unclear next steps create guesswork. People default to safe choices that keep access intact, even when it should end. Sampling shrinks the list and gives each decision the attention it deserves.

You can feel the stress in the process. A manager opens a spreadsheet, sees 200 names they barely recognize, and realizes this will eat the afternoon. Then the questions start. Who is this person? When did they last log in? What is this group even for? Without answers on the screen, the only rational move is keep.

The 11 pm certification scramble

End of quarter hits. Someone realizes reviews are due tomorrow. A flurry of messages starts. People copy and paste screenshots, beg for extensions, and approve by muscle memory. I have lived that week. No one wants to repeat it.

A smaller, risk-focused list avoids the scramble. You can schedule campaigns earlier, route to the right person, and finish in days instead of weeks. People actually engage because the task is reasonable.

Manager guesswork without context

Managers know their team’s work, but they do not memorize every group across every app. Asking them to validate access without usage, title, and history is setting them up to fail. With the right context on the review screen, accuracy rises and speed follows.

That is the engine here. Better information, fewer items. Reviewers shift from “I hope this is right” to “I can prove this is right.”

How to Run Risk-Stratified Statistical Sampling for Access Reviews

A defensible sampling plan starts with risk strata, not math. Segment users by app sensitivity and role criticality, then size samples per stratum to reach 90 to 95 percent confidence with a practical margin of error. Document the method so anyone can retrace it.

The math is straightforward once the strata are set. High-risk apps and privileged roles get larger samples, and lower-risk viewer roles get smaller ones. You are balancing assurance and effort. The point is not a perfect sample. It is a reproducible one aligned to risk.

Stratify by risk, then sample

Start simple. Define three strata: high, medium, low. High might include production systems and admin roles. Medium might be internal business apps with edit rights. Low might be read-only access in low-sensitivity tools.
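A minimal sketch of that three-way split, assuming each access grant carries an app sensitivity label and a role. The labels and the `Grant` type are hypothetical, and the choice to treat anything ambiguous as medium is an assumption, not a rule from any standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    user: str
    app_sensitivity: str  # assumed labels: "production", "internal", "low"
    role: str             # assumed labels: "admin", "editor", "viewer"


def stratum(grant: Grant) -> str:
    """Assign a grant to the high, medium, or low risk stratum."""
    # High: production systems or admin roles, whichever applies.
    if grant.app_sensitivity == "production" or grant.role == "admin":
        return "high"
    # Low: read-only access in low-sensitivity tools.
    if grant.app_sensitivity == "low" and grant.role == "viewer":
        return "low"
    # Everything else, including internal apps with edit rights, is medium.
    return "medium"


grants = [
    Grant("ana", "production", "viewer"),
    Grant("ben", "internal", "editor"),
    Grant("cy", "low", "viewer"),
]
for g in grants:
    print(g.user, stratum(g))
```

Defaulting unclear cases to medium errs on the side of a larger sample, which is easier to defend than quietly treating unknowns as low risk.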

With just three strata, you can already pick smarter battles. A 25 percent sample in high risk tells a stronger story than a 5 percent sample across everything. That is the heart of it. Focus where it hurts if it fails.

How to size your sample confidently

You need a repeatable formula, not a guess. A common approach uses finite population correction with expected error rate, confidence level, and margin of error. Pick your confidence, pick your margin, then compute per stratum.
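One common form of that calculation is Cochran's formula with a finite population correction. It is shown here as one reasonable choice, not the only defensible one. The symbols are introduced for illustration: $N$ is the number of access grants in the stratum, $p$ the expected exception rate, $e$ the margin of error, and $z$ the z-score for the chosen confidence level (about 1.645 for 90 percent, 1.96 for 95 percent).

$$
n_0 = \frac{z^2 \, p \,(1 - p)}{e^2},
\qquad
n = \frac{n_0}{1 + \dfrac{n_0 - 1}{N}}
$$

Round $n$ up to the next whole number. As a worked example with an assumed stratum of $N = 400$ grants, $p = 0.05$, $e = 0.05$, and 95 percent confidence: $n_0 = 3.8416 \times 0.0475 / 0.0025 \approx 72.99$, and $n \approx 72.99 / 1.18 \approx 61.9$, so you would review 62 grants.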

A quick, practical flow:

- Choose confidence and margin per stratum, for example 95 percent and 5 percent for high risk, 90 percent and 7 percent for medium, 90 percent and 10 percent for low.

- Estimate an expected exception rate, even if conservative, for example 5 percent.

- Calculate the sample size per stratum using a standard equation with finite population correction.

- Randomize the selection, lock the list, and record the seed so it is reproducible.
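The flow above can be sketched in a few lines of Python using only the standard library. This assumes Cochran's formula with finite population correction as the "standard equation". The stratum populations (400, 1,500, 5,000), the user IDs, and the seed are made up for illustration.

```python
import math
import random
from statistics import NormalDist


def sample_size(population: int, confidence: float, margin: float,
                expected_rate: float = 0.05) -> int:
    """Cochran's formula with finite population correction, rounded up."""
    if population <= 0:
        return 0
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)  # two-sided z-score
    n0 = (z ** 2) * expected_rate * (1 - expected_rate) / margin ** 2
    n = n0 / (1 + (n0 - 1) / population)
    return min(population, math.ceil(n))


def select_sample(user_ids: list[str], size: int, seed: int) -> list[str]:
    """Seeded random pick. Sorting first makes the input order irrelevant."""
    rng = random.Random(seed)
    return rng.sample(sorted(user_ids), size)


# Confidence and margin per stratum, taken from the first step above.
# Population sizes are hypothetical.
plan = {
    "high": (400, 0.95, 0.05),
    "medium": (1500, 0.90, 0.07),
    "low": (5000, 0.90, 0.10),
}
for name, (population, confidence, margin) in plan.items():
    print(name, sample_size(population, confidence, margin))

# Lock the list: the same IDs and the same seed always give the same sample.
high_risk_ids = [f"user-{i}" for i in range(400)]
locked = select_sample(high_risk_ids, sample_size(400, 0.95, 0.05), seed=20240101)
```

With these assumed populations the sizes come out to 62, 26, and 13. Record the seed and the input list alongside the campaign so an auditor can regenerate the exact same selection.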

The reviewer evidence pack

Speed comes from preparation. Reviewers should see, on one screen, the user’s job title, department, manager, groups, last login, and the original request ticket. Add a short rationale prompt and one-click Keep or Revoke.

A small checklist helps prevent misses:

- Show last login, group memberships, and role in plain language

- Surface recommendations on obvious cases, for example inactive 90 plus days

- Capture rationale on revokes in a required short text field

- Write every action back to the ticket for audit
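To make the checklist concrete, here is a minimal sketch of an evidence pack as a data structure, with a recommendation rule and a rationale check. Every name here is hypothetical and this is not Multiplier's data model. Treating a user who has never logged in as a revoke candidate is an assumption you may want to change.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class EvidencePack:
    user: str
    job_title: str
    department: str
    manager: str
    groups: list[str]
    last_login: Optional[date]  # None means no login on record
    request_ticket: str         # key of the original request ticket


def recommend(pack: EvidencePack, today: date, inactive_days: int = 90) -> str:
    """Suggest 'revoke' for obvious cases. The reviewer still decides."""
    if pack.last_login is None:
        return "revoke"  # assumption: never-used access is a revoke candidate
    if (today - pack.last_login).days >= inactive_days:
        return "revoke"
    return "keep"


def record_decision(pack: EvidencePack, decision: str, rationale: str = "") -> dict:
    """Build the audit entry to write back to the ticket."""
    if decision not in {"keep", "revoke"}:
        raise ValueError("decision must be 'keep' or 'revoke'")
    if decision == "revoke" and not rationale.strip():
        raise ValueError("a short rationale is required on revokes")
    return {
        "ticket": pack.request_ticket,
        "user": pack.user,
        "decision": decision,
        "rationale": rationale.strip(),
    }
```

The point of the structure is that nothing on the review screen needs a second lookup, and a revoke cannot be saved without a reason attached.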

Ready to reduce review load without losing assurance? Learn more about Multiplier.

Making the New Way Real in Jira: Multiplier for Access Reviews and Revocations

The method works best when the system enforces it. Multiplier brings sampling, reviews, and revocations into Jira and Slack, then executes changes through your identity provider. That keeps approvals fast, decisions accurate, and evidence complete on the ticket.

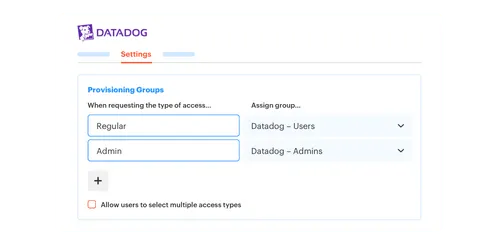

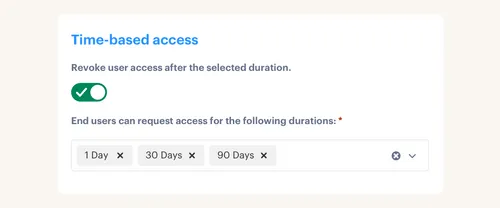

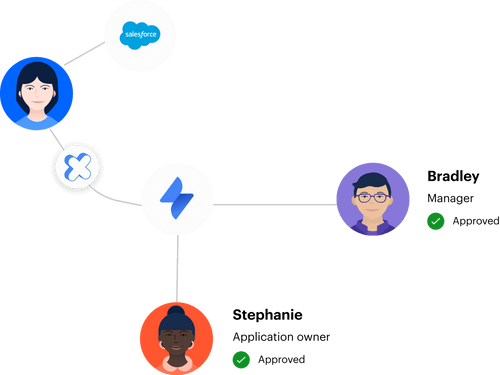

Access Reviews run as Jira-native campaigns. Reviewers see usage and attributes, click Keep or Revoke, and Multiplier removes users from IDP groups automatically when you choose Revoke. Time-Based Access makes elevated roles temporary by default, so standing privilege shrinks between campaigns. Approval Workflows route decisions to managers or app owners in Slack, then Automated Provisioning applies changes through Okta, Entra, or Google Workspace.

Access reviews in Jira, not spreadsheets

Multiplier’s Access Reviews replace ad hoc exports with in-portal campaigns. You select in-scope apps, assign reviewers, and launch. Reviewers land in Jira Service Management (JSM), see last login, title, groups, and a recommendation, then act. Keep and Revoke decisions write to the issue, and Revokes remove group membership through the IDP.

The payoff is twofold. Decisions get faster because context is present. Evidence gets stronger because every click ties to a change. When auditors ask how you sized the sample and what changed, you have a single record that answers both.

Time-bound access and automatic cleanup

Least privilege sticks when expiry is automatic. With Time-Based Access, requesters pick a short window for elevated roles, then Multiplier removes the group membership when the timer ends. No calendar reminders, no follow ups.

Auto Reclaim handles the waste side. Inactive users receive a warning email, then access is removed if they do not log in during the grace period. A ticket documents the change. Finance sees savings. Security sees reduced exposure.
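The warn-then-remove logic is simple enough to sketch. This is an illustration of the general pattern under assumed thresholds (90 days of inactivity, a 14-day grace period), not a description of how Multiplier implements Auto Reclaim. The function and parameter names are hypothetical.

```python
from datetime import date
from typing import Optional


def reclaim_action(last_login: date, today: date, warned_on: Optional[date],
                   inactive_days: int = 90, grace_days: int = 14) -> str:
    """Return the next step for one user: 'none', 'warn', 'wait', or 'revoke'."""
    # Active users, including anyone who logged in after a warning, are left alone.
    if (today - last_login).days < inactive_days:
        return "none"
    # Inactive and not yet warned: send the warning first.
    if warned_on is None:
        return "warn"
    # Warned and the grace period has run out without a login: remove access.
    if (today - warned_on).days >= grace_days:
        return "revoke"
    # Warned, still inside the grace period.
    return "wait"
```

Because a login during the grace period resets the inactivity clock, the user drops back to `none` without anyone having to cancel the pending removal.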

70 percent fewer clicks per review, and revocations executed without chasing people. See how Multiplier works.

Provisioning through your identity provider

Approvals do not mean much if changes drift. Multiplier provisions by adding or removing users from identity provider groups, then SCIM or SAML flows apply the app-side entitlements. That keeps the IDP authoritative and your audit trail clean.

Approvers act in Slack or JSM. Multiplier transitions the ticket, calls Okta, Entra, or Google Workspace, then posts success or error back to the issue. No screenshots required. No side channels to reconcile.

Bring the reviewer screen, the approval, and the change into one system you already run. Get started with Multiplier.

Conclusion

Full-scope access reviews are performative rigor. A risk-stratified sampling plan delivers real assurance without burning your team. When you put statistical sampling for access inside Jira, route approvals in Slack, and drive changes through the identity provider, audits become a byproduct of normal work.

Do this well and you cut reviewer workload by up to 70 percent while maintaining 90 to 95 percent confidence in your results. The bonus is real cleanup. Expiries enforce themselves, idle licenses get reclaimed, and every decision links to evidence. That is the outcome that matters.

Frequently Asked Questions

How do I create an access review campaign in Multiplier?

To create an access review campaign in Multiplier, follow these steps: 1) Go to the Access Reviews section in Jira. 2) Click on 'New Review' and fill out the form with the campaign name, applications to include (only those marked as Approved), and the start and end dates. 3) Assign reviewers for each app, then click 'Create Access Review' to launch the campaign. This process transforms your access review from a manual task into a streamlined workflow within Jira, making it easier to manage and audit.

What if I need to adjust the sample size for access reviews?

If you need to adjust the sample size for your access reviews, you can do this by modifying the parameters in your sampling method. Start by defining your risk strata based on application sensitivity and role criticality. Then, calculate the sample size for each stratum to ensure you achieve the desired confidence level, typically between 90 and 95 percent. Document these adjustments clearly so that anyone can retrace the steps taken.

Can I automate access provisioning with Multiplier?

Yes, you can automate access provisioning with Multiplier. Once an access request is approved, Multiplier automatically calls your identity provider (like Okta or Entra, formerly Azure AD) to add or remove users from the appropriate groups. This eliminates manual steps and reduces the risk of errors. Just ensure that your identity provider is properly integrated with Multiplier to take full advantage of this automation.

When should I use time-based access with Multiplier?

You should use time-based access with Multiplier when you want to enforce least privilege by making elevated access temporary. This is especially useful for high-risk roles or sensitive applications. During the access request process, users can select a duration for their elevated access, which is automatically revoked once the time expires. This helps minimize security risks and reduces unnecessary license costs.

Why does reviewer context matter in access reviews?

Reviewer context is crucial in access reviews because it provides the necessary information to make informed decisions. When reviewers have access to details like last login dates, job titles, and group memberships, they can quickly assess whether to keep or revoke access. Multiplier enhances this process by displaying all relevant context in one place, which speeds up decision-making and improves accuracy.