90-day audits get messy for one reason more than any other: the work happened in 4 places, but the evidence is expected in 1. And if you felt that this week, chasing approvals in Slack, checking Okta, updating Jira, then rebuilding the story in a spreadsheet, you already know the importance of automation in access reviews.

A lot of teams think audits are a reporting problem. I don't buy that. They're an operating model problem first. If the review, the decision, the revocation, and the proof all live in different places, you're not running a review program. You're running an evidence reconstruction exercise.

Key Takeaways:

- The importance of automation in access reviews shows up fastest when you look at revocation speed, audit evidence quality, and reviewer completion rates.

- If access reviews still depend on spreadsheets, your team is auditing memory, not access.

- A simple rule works well: if a reviewer clicks revoke, the system should remove access in the same workflow, not in a separate follow-up ticket.

- Usage context matters more than reviewer effort. Last login, group membership, job title, and owner data cut rubber-stamping fast.

- Governance works better inside Jira and Slack because that's where the work already happens.

- Separate IGA portals often add control on paper and delay in practice.

- The best automation doesn't remove human judgment. It removes manual handoffs.

Why Manual Access Reviews Break Down So Fast

Manual access reviews break down because the review itself isn't the hard part. The hard part is everything around it: finding the right data, routing each review to the right person, enforcing the decision, and proving it later. That's the real importance of automation in access reviews: it takes the admin work out of the control.

Picture a mid-market IT team at quarter end. One person exports users from the identity provider. Another cleans the file. App owners get a spreadsheet by email. Half of them miss it. Someone follows up in Slack. A reviewer marks "remove" on 14 users, but nobody actually removes them until a week later. By the time the auditor asks what happened, the answer is spread across CSVs, chat threads, and Jira comments. It's exhausting, and honestly, it's where good intentions go to die.

The review is not the workflow

Most teams treat the review file as the workflow. That's the first mistake. A spreadsheet is just a container. It doesn't know who should review what, it can't enforce revocations, and it definitely can't create a clean trail on its own.

I think this is where a lot of compliance programs quietly fail. They confuse visibility with control. Seeing a list of users isn't the same as governing access. The metric that matters here is the Review-to-Revoke Gap: the time between a reviewer deciding access should be removed and the system actually removing it. If that gap is more than 24 hours for sensitive apps, your review process is weak, even if your spreadsheet looks organized.

And this gets worse with growth. One fast-growing company in fintech had hundreds of routine access requests arriving through Slack, email, and Jira. Their IT team was stuck chasing approvals and manually assigning Okta groups. That kind of setup doesn't just slow requests. It trains the team to accept messy evidence as normal.
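If you want to track the Review-to-Revoke Gap rather than just talk about it, the calculation is small. Here's a minimal Python sketch, assuming you can export each revoke decision with a decision timestamp and a removal timestamp. The `RevokeDecision` record, its field names, and the `sensitive` flag are hypothetical, not any tool's schema.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional

# Threshold from the article: a gap over 24 hours on a sensitive app is a weak control.
SENSITIVE_GAP_LIMIT = timedelta(hours=24)


@dataclass
class RevokeDecision:
    user: str
    app: str
    sensitive: bool
    decided_at: datetime                   # reviewer marked "revoke"
    removed_at: Optional[datetime] = None  # access actually removed; None = still open


def review_to_revoke_gap(d: RevokeDecision, now: datetime) -> timedelta:
    """Decision-to-removal time; open decisions keep aging until `now`."""
    end = d.removed_at if d.removed_at is not None else now
    return end - d.decided_at


def gap_violations(decisions: List[RevokeDecision], now: datetime) -> List[RevokeDecision]:
    """Sensitive-app revocations whose gap exceeds the 24-hour limit."""
    return [d for d in decisions
            if d.sensitive and review_to_revoke_gap(d, now) > SENSITIVE_GAP_LIMIT]


now = datetime(2025, 3, 31, 12, 0)
decisions = [
    RevokeDecision("alice", "payroll", True, datetime(2025, 3, 24, 9, 0), datetime(2025, 3, 31, 9, 0)),
    RevokeDecision("bob", "payroll", True, datetime(2025, 3, 31, 9, 0), datetime(2025, 3, 31, 9, 5)),
    RevokeDecision("carol", "wiki", False, datetime(2025, 3, 20, 9, 0)),
]
print([d.user for d in gap_violations(decisions, now)])  # ['alice']
```

Letting unenforced decisions age against the current time is deliberate: a revoke that never executed is the widest gap of all, and it should show up in the report rather than disappear from it.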

Separate portals create a second queue

A separate governance portal sounds tidy on paper. In practice, it often creates a second queue your team has to maintain. Jira has the ticket. Slack has the conversation. The identity provider has the real entitlement. The portal has the certification task. Now everybody is doing swivel-chair work.

That's why I keep coming back to one contrarian point: identity governance belongs in Jira. Not because portals are useless. They do have a place in some large, complex environments. Fair point. But if your team already runs service delivery in Jira Service Management, adding another portal usually creates more context switching than control.

Use a simple threshold here. If more than 20% of your review tasks require someone to leave their normal workflow just to finish the review, your design is already fighting adoption. And if adoption is weak, your audit evidence will be weak too. The next question is obvious: what exactly are you trying to automate?
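The 20% threshold is easy to check if you tag each review task with whether the reviewer had to leave Jira or Slack to finish it. A rough Python sketch, assuming a hypothetical `left_normal_workflow` flag on each task record (how you capture that flag is up to you):

```python
from typing import Dict, List


def off_workflow_share(tasks: List[Dict[str, bool]]) -> float:
    """Fraction of review tasks that forced the reviewer out of their normal tools."""
    if not tasks:
        return 0.0
    off = sum(1 for t in tasks if t["left_normal_workflow"])
    return off / len(tasks)


def adoption_at_risk(tasks: List[Dict[str, bool]], threshold: float = 0.20) -> bool:
    """True when more than `threshold` of tasks happened outside the normal workflow."""
    return off_workflow_share(tasks) > threshold


tasks = [{"left_normal_workflow": i < 12} for i in range(50)]  # 12 of 50 left the workflow
print(off_workflow_share(tasks), adoption_at_risk(tasks))  # 0.24 True
```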

The Real Problem Isn't Certification. It's Decision-to-Action Lag

The real problem isn't that reviewers won't certify access. It's that their decisions don't reliably turn into action. That's a completely different problem, and it changes how you think about the importance of automation in access reviews. You're not just trying to speed up attestations. You're trying to close the loop.

Back when teams were smaller, you could survive with a loose process. A manager knew most people by name. A reviewer had context in their head. Somebody in IT could clean things up manually after the fact. Once you get past a few hundred employees, that falls apart. Memory stops scaling. Process has to take over.

The hidden bottleneck is missing context

Ask a reviewer to approve or revoke access with no context, and you'll get one of two outcomes. They delay. Or they rubber-stamp. Neither is good. The Context Floor Framework is simple: every review decision should include at least four data points before the reviewer acts. Last login. Current group membership. Job title or department. App owner or reviewer assignment. Drop below four, and decision quality falls fast.

That's not theory. It's just human behavior. If I'm reviewing 60 users for a tool and I can't see whether they logged in last week or last quarter, I'm guessing. And a guessed certification is still a bad certification, even if it gets submitted on time.

Some teams push back here and say more context creates more complexity. That's valid in small environments. But once a campaign crosses 100 users or 10 apps, missing context costs more time than showing it. You spend that time in Slack, in comments, in side conversations, in follow-ups. Same work. Worse format.
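One way to enforce the Context Floor is to check every review row before it reaches a reviewer. The Python sketch below assumes a hypothetical `ReviewRow` shape with the four data points as fields. `None` means the data is unknown, while an empty group list still counts as known context.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional

# The four data points the Context Floor asks for.
CONTEXT_FIELDS = ("last_login", "groups", "job_title_or_department", "reviewer")


@dataclass
class ReviewRow:
    user: str
    last_login: Optional[date] = None
    groups: Optional[List[str]] = None
    job_title_or_department: Optional[str] = None
    reviewer: Optional[str] = None  # app owner or assigned reviewer


def missing_context(row: ReviewRow) -> List[str]:
    """Context fields that are unknown (None) for this row."""
    return [f for f in CONTEXT_FIELDS if getattr(row, f) is None]


def meets_context_floor(row: ReviewRow) -> bool:
    return not missing_context(row)


complete = ReviewRow("alice", date(2025, 3, 2), ["eng-prod"], "Engineering", "owner@example.com")
partial = ReviewRow("bob", groups=["finance"])
print(meets_context_floor(complete), missing_context(partial))
# True ['last_login', 'job_title_or_department', 'reviewer']
```

Rows that fail the check can be held back or enriched before launch instead of landing in front of a reviewer who will have to guess.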

Revocation has to be part of the review

A lot of access review programs stop at decision capture. Reviewer says revoke. Then a separate admin, in a separate system, gets around to it later. That's the structural bug. If the revoke action isn't built into the review workflow, you haven't automated governance. You've automated notation.

This is where the importance of automation in access reviews becomes very concrete. If revoke decisions execute through the identity provider group mapping in the same system of record, the control actually holds. If they don't, you create drift. And drift is expensive.

One fintech team dealing with long-lived privileged access went the other way. Instead of leaving removal to follow-up work, they moved to time-bound access with automatic revocation and cut privileged access by 85%. Access from more than 1,300 requests was revoked automatically once the approved window ended. That's a big operational shift. But the deeper lesson is simpler: when removal is automatic, least privilege stops being aspirational.

Audit evidence should be a byproduct

You should not need a separate project to prove a control happened. That's the old world. A better model is what I call the Evidence-by-Default Rule: if a request, approval, review decision, and revocation all happen in one linked workflow, the evidence should already exist without screenshots.

This matters more than people think. Audit prep time is one of those hidden costs that leadership rarely sees until the quarter gets ugly. Somebody on the team burns days reconstructing a timeline from Jira, Slack, email, and exported files. The work happened. The proof didn't.
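To make the Evidence-by-Default Rule concrete, here's a minimal Python sketch of a linked trail that records each step as it happens, keyed to one ticket. The `EvidenceTrail` class, the step names, and the `IT-123` key are illustrative assumptions, not Multiplier's data model.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import List, Tuple

# Steps the article lists for one linked workflow that ends in a revocation.
REQUIRED_STEPS = ("request", "approval", "review_decision", "revocation")


@dataclass
class EvidenceTrail:
    ticket_key: str  # hypothetical ticket key, e.g. "IT-123"
    events: List[Tuple[str, str, datetime]] = field(default_factory=list)  # (step, actor, when)

    def record(self, step: str, actor: str, when: datetime) -> None:
        """Append evidence at the moment the work happens, not at audit time."""
        self.events.append((step, actor, when))

    def missing_steps(self) -> List[str]:
        seen = {step for step, _, _ in self.events}
        return [s for s in REQUIRED_STEPS if s not in seen]

    def timeline(self) -> List[Tuple[str, str, datetime]]:
        return sorted(self.events, key=lambda e: e[2])


trail = EvidenceTrail("IT-123")
trail.record("request", "alice", datetime(2025, 1, 6, 9, 0))
trail.record("approval", "manager-bob", datetime(2025, 1, 6, 10, 0))
trail.record("review_decision", "owner-carol", datetime(2025, 4, 2, 14, 0))
print(trail.missing_steps())  # ['revocation']
trail.record("revocation", "idp-automation", datetime(2025, 4, 2, 14, 1))
print(trail.missing_steps())  # []
```

The useful property is that "what's missing?" becomes a query against one record instead of a reconstruction project across Jira, Slack, and exported files.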

Want to see what this looks like when the system is set up right? See how Multiplier works

What Good Automation in Access Reviews Actually Looks Like

Good automation in access reviews doesn't mean removing reviewers. It means removing the manual glue work that sits around the reviewer. That's the version of automation that actually improves control. Human judgment stays. Friction goes.

If you want a practical model, use the 5-Link Review Chain: scope, assign, review, enforce, prove. If any link is manual for your critical apps, that's where your process will slow down or break. Not maybe. Usually.
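The 5-Link Review Chain works as a quick self-assessment. A small Python sketch, assuming you describe each app with a hypothetical `critical` flag and a map of which links are automated. Anything not marked automated is treated as manual, which is the safer default.

```python
from typing import Dict, List

# The five links named in the article, in order.
CHAIN = ("scope", "assign", "review", "enforce", "prove")


def manual_links(automated: Dict[str, bool]) -> List[str]:
    """Links not marked as automated; anything unlisted is treated as manual."""
    return [link for link in CHAIN if not automated.get(link, False)]


def weak_points(apps: Dict[str, dict]) -> Dict[str, List[str]]:
    """For critical apps only, report which links still depend on manual work."""
    report = {}
    for name, cfg in apps.items():
        gaps = manual_links(cfg["automated"])
        if cfg["critical"] and gaps:
            report[name] = gaps
    return report


apps = {
    "payroll": {"critical": True,
                "automated": {"scope": True, "assign": True, "review": True}},
    "crm": {"critical": True,
            "automated": {link: True for link in CHAIN}},
    "wiki": {"critical": False, "automated": {}},
}
print(weak_points(apps))  # {'payroll': ['enforce', 'prove']}
```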

Start with scoping and ownership

A review campaign should start with clean scope. Which apps are in. Who owns them. Who reviews them. When the review is due. That matters more than most teams realize, because vague scope creates vague accountability.

I've seen this play out a hundred times in different forms. The app is in the campaign, but nobody clearly owns it. Or the manager is technically the reviewer, but the app owner has the actual context. Or the date exists, but nobody treats it as operationally meaningful. Then everybody acts surprised when reminders and escalations become manual.

If you have 10 or more apps in scope, assign reviewers before launch, not after. That's a hard threshold I'd use. Below that, you can still manage by exception. Above that, post-launch cleanup becomes its own project. And that defeats the point.
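The 10-app rule can run as a pre-launch gate. The Python sketch below is an illustration under stated assumptions: the campaign is a plain list of apps with hypothetical `name` and `reviewer` keys. At 10 or more apps, any unassigned reviewer blocks launch. Below that, gaps are listed but launch proceeds, matching the manage-by-exception approach.

```python
from typing import Dict, List, Optional


def prelaunch_check(apps: List[Dict[str, Optional[str]]], threshold: int = 10) -> dict:
    """Block launch when a campaign with `threshold`+ apps has unassigned reviewers."""
    unassigned = [a["name"] for a in apps if not a.get("reviewer")]
    block = len(apps) >= threshold and bool(unassigned)
    return {"ok_to_launch": not block, "unassigned": unassigned}


big = [{"name": f"app{i}", "reviewer": "owner@example.com"} for i in range(11)]
big.append({"name": "app11", "reviewer": None})
print(prelaunch_check(big))  # {'ok_to_launch': False, 'unassigned': ['app11']}

small = [{"name": "vpn", "reviewer": None}, {"name": "wiki", "reviewer": "it@example.com"}]
print(prelaunch_check(small))  # {'ok_to_launch': True, 'unassigned': ['vpn']}
```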

Put reviewers inside a decision environment

A good reviewer experience is boring in the best way. Open the task. See the user. See their groups. See last login. Decide. Move on. That's it.

This is where the importance of automation in access reviews really connects to completion rate. The lower the cognitive load, the faster decisions happen. In my experience, reviewers don't resist reviews because they hate governance. They resist them because the workflow feels like unpaid detective work.

Use this rule: if a reviewer needs more than 30 seconds to understand a single row of access, your review design is too heavy. That doesn't mean oversimplifying it. It means packaging context better. Clean context beats long policy docs every time.

Enforce decisions in the same system

Once a reviewer marks revoke, the next action isn't an email. It's enforcement, ideally through identity provider groups, because they're authoritative and auditable. If the entitlement lives in the identity provider, the revocation should happen there too.

This is the part teams often skip because it feels more technical. But it's not optional. It's the bridge between governance intent and governance reality. Without it, you're still depending on follow-through.
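Here's what same-step enforcement can look like against one identity provider. This Python sketch assumes Okta and group-based entitlements, and builds a call to Okta's Groups API endpoint for unassigning a user from a group (`DELETE /api/v1/groups/{groupId}/users/{userId}` with an `SSWS` API token). The domain, token, and IDs are placeholders, error handling is minimal, and the `send` parameter exists only so the example can run without a live tenant.

```python
import urllib.request
from urllib.parse import quote


def build_group_removal(okta_domain: str, api_token: str,
                        group_id: str, user_id: str) -> urllib.request.Request:
    """Build (but do not send) Okta's 'unassign user from group' request."""
    url = (f"https://{okta_domain}/api/v1/groups/"
           f"{quote(group_id, safe='')}/users/{quote(user_id, safe='')}")
    return urllib.request.Request(
        url,
        method="DELETE",
        headers={"Authorization": f"SSWS {api_token}", "Accept": "application/json"},
    )


def enforce_revoke(okta_domain: str, api_token: str, group_id: str, user_id: str,
                   send=urllib.request.urlopen) -> dict:
    """Execute the revoke in the same step as the decision and return an audit record."""
    req = build_group_removal(okta_domain, api_token, group_id, user_id)
    resp = send(req)  # urlopen raises HTTPError on 4xx/5xx responses
    status = getattr(resp, "status", None)
    return {"group_id": group_id, "user_id": user_id,
            "status": status, "removed": status == 204}


class _FakeResponse:  # stands in for a real HTTP response in this example
    status = 204


record = enforce_revoke("example.okta.com", "REDACTED", "00g-payroll", "00u-alice",
                        send=lambda req: _FakeResponse())
print(record["removed"])  # True
```

A production integration would also need retries, rate-limit handling, and secure token storage. The point of the sketch is the shape: the decision, the API call, and the audit record come out of one function rather than three systems.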

One AI company scaled from about 100 to over 400 employees in two years and had IT requests tracked in Slack and Notion. Notifications got missed. Provisioning was manual. After moving to a more automated model inside Jira Service Management and Okta, they processed 3,800+ access requests in a year, with 75% fully automated. A four-person IT team supported 420+ employees. That's not magic. That's what happens when the workflow is structured properly.

Use inactivity to sharpen review quality

Not every review needs the same weight. Some access decisions should be influenced by real usage. That's where login telemetry matters. If someone hasn't logged into an app for 90 days, that should shape the recommendation before the reviewer even opens the task.

I like the 90-30 Rule here. If a user hasn't logged in for 90+ days, show revoke as the recommended action. For high-cost or high-risk apps, tighten that window to 30 days. You still let a human decide. But now they're deciding with signal, not just list fatigue.

That same logic is why license optimization and access reviews fit together better than people expect. Both are really asking the same question: should this person still have this entitlement? Different budget owner. Same operational truth.
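The 90-30 Rule reduces to a few lines. In this Python sketch the 90- and 30-day windows come from the text; treating a user who has never logged in as inactive is an assumption, and `high_risk` is a hypothetical per-app flag. The function only produces a recommendation. The reviewer still makes the call.

```python
from datetime import date, timedelta
from typing import Optional

DEFAULT_WINDOW = timedelta(days=90)    # 90+ days inactive -> recommend revoke
HIGH_RISK_WINDOW = timedelta(days=30)  # tighter window for high-cost or high-risk apps


def recommend(last_login: Optional[date], today: date, high_risk: bool = False) -> str:
    """Suggested action shown to the reviewer; a human still decides."""
    if last_login is None:
        return "revoke"  # assumption: never logged in counts as inactive
    window = HIGH_RISK_WINDOW if high_risk else DEFAULT_WINDOW
    return "revoke" if today - last_login >= window else "keep"


today = date(2025, 6, 30)
print(recommend(date(2025, 4, 1), today))                   # revoke (90 days)
print(recommend(date(2025, 5, 15), today))                  # keep (46 days)
print(recommend(date(2025, 5, 15), today, high_risk=True))  # revoke (46 >= 30)
```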

How Multiplier Keeps Access Reviews Inside Jira

Multiplier keeps access governance inside Jira Service Management, which is a big part of why the workflow stays intact. Instead of splitting review work across a portal, spreadsheets, Slack threads, and manual cleanup, Multiplier ties the request, approval, review decision, and evidence back to Jira.

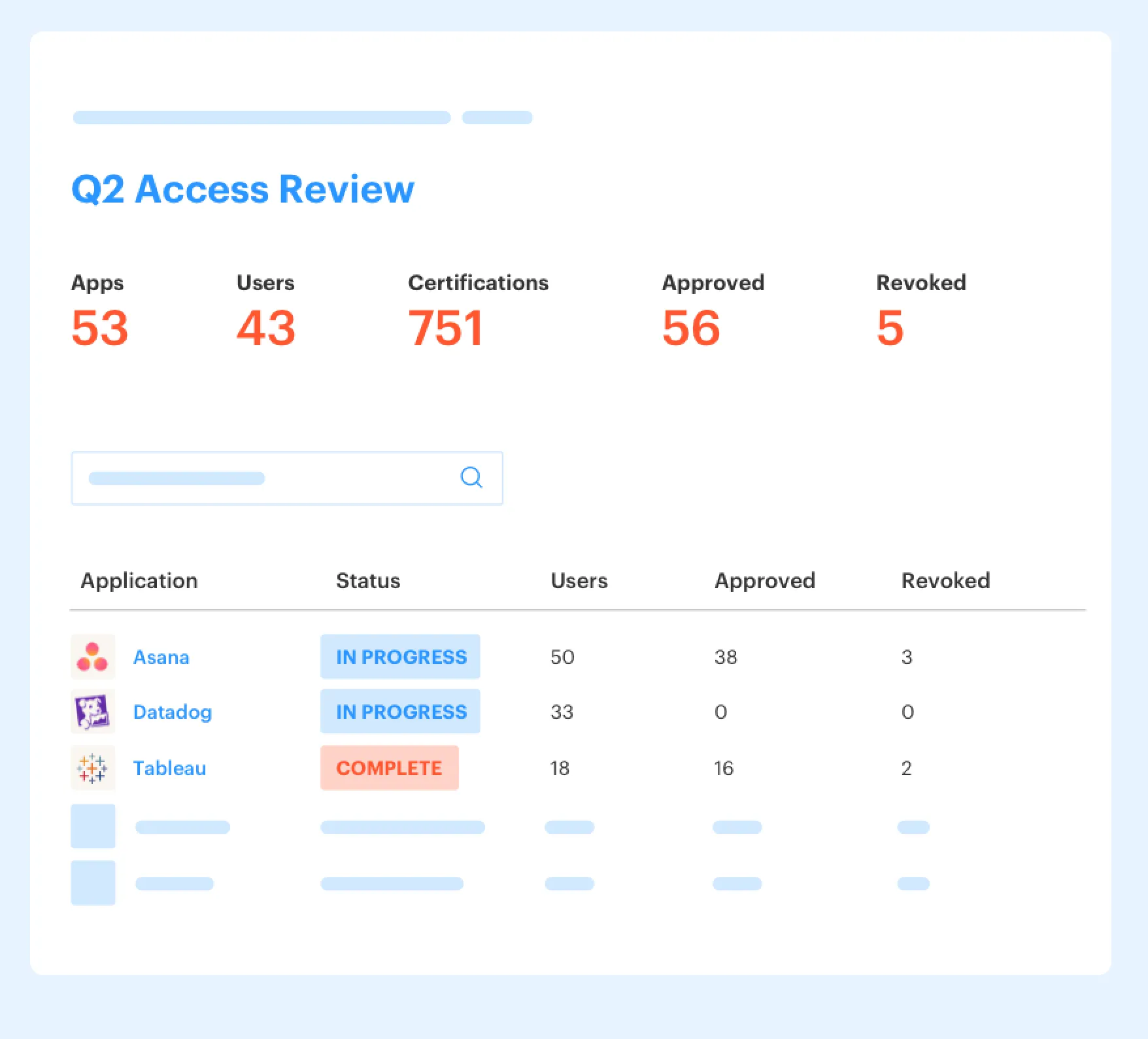

Access reviews with context and enforced revocation

Multiplier's Access Reviews run as a Jira-native campaign workflow. Admins create campaigns, select in-scope applications, assign reviewers, and launch from there. Reviewers land in a JSM Help Center dashboard and can see user attributes, groups, job titles, departments, last login dates, and recommendations before they decide to keep or revoke access.

That matters because it meets the Context Floor I mentioned earlier. The reviewer isn't staring at a dead spreadsheet. They're making a decision with enough context to be credible. And when they revoke, Multiplier can automatically remove users from the relevant identity provider groups, create Jira tickets documenting the change, and keep campaign progress visible as it moves. So the old Review-to-Revoke Gap shrinks fast.

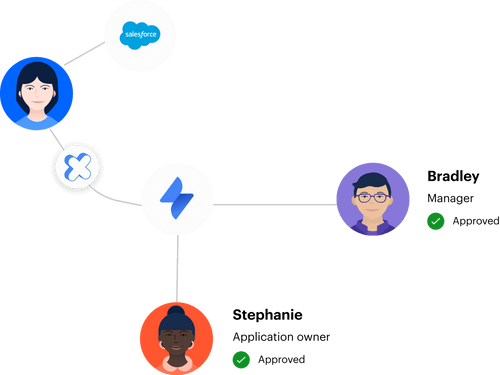

Approval and provisioning stay connected

Multiplier also ties Approval Workflows and Automated Provisioning together in the same Jira-based flow. Admins can route approvals to a manager, app owner, or specific user, and those decisions can happen in Jira or Slack. Once approved, provisioning happens through identity provider group mappings in Okta, Entra ID, or Google Workspace.

That's important for auditability. The ticket isn't just a request log anymore. It becomes the operating record. You can see who approved, what changed, and when the change executed. For teams that want temporary access by default, Time-Based Access adds duration choices like 1, 6, or 24 hours and removes the group membership automatically at expiry, as long as the access is provisioned through the identity provider group.
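The mechanics of time-bound access come down to storing an end time at approval and removing group membership once it passes. A minimal Python sketch, assuming grants are tracked as `(user, group, expires_at)` tuples; it illustrates the idea and is not how Multiplier implements Time-Based Access. Only the 1, 6, and 24-hour durations from the text are accepted, and the actual removal call to the identity provider is left out.

```python
from datetime import datetime, timedelta, timezone
from typing import List, Tuple

# Durations the article lists for Time-Based Access.
ALLOWED_HOURS = (1, 6, 24)


def expires_at(approved_at: datetime, hours: int) -> datetime:
    """End of the access window; only the offered durations are accepted."""
    if hours not in ALLOWED_HOURS:
        raise ValueError(f"unsupported duration: {hours}h (allowed: {ALLOWED_HOURS})")
    return approved_at + timedelta(hours=hours)


def due_for_removal(grants: List[Tuple[str, str, datetime]],
                    now: datetime) -> List[Tuple[str, str]]:
    """(user, group) pairs whose window has ended and should leave the IdP group."""
    return [(user, group) for user, group, end in grants if now >= end]


approved = datetime(2025, 5, 1, 9, 0, tzinfo=timezone.utc)
grants = [
    ("alice", "prod-db-admins", expires_at(approved, 6)),   # ends 15:00 UTC
    ("bob", "prod-db-admins", expires_at(approved, 24)),    # ends next day 09:00 UTC
]
now = datetime(2025, 5, 1, 16, 0, tzinfo=timezone.utc)
print(due_for_removal(grants, now))  # [('alice', 'prod-db-admins')]
```

Using timezone-aware UTC timestamps avoids windows that silently stretch or shrink across daylight-saving changes.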

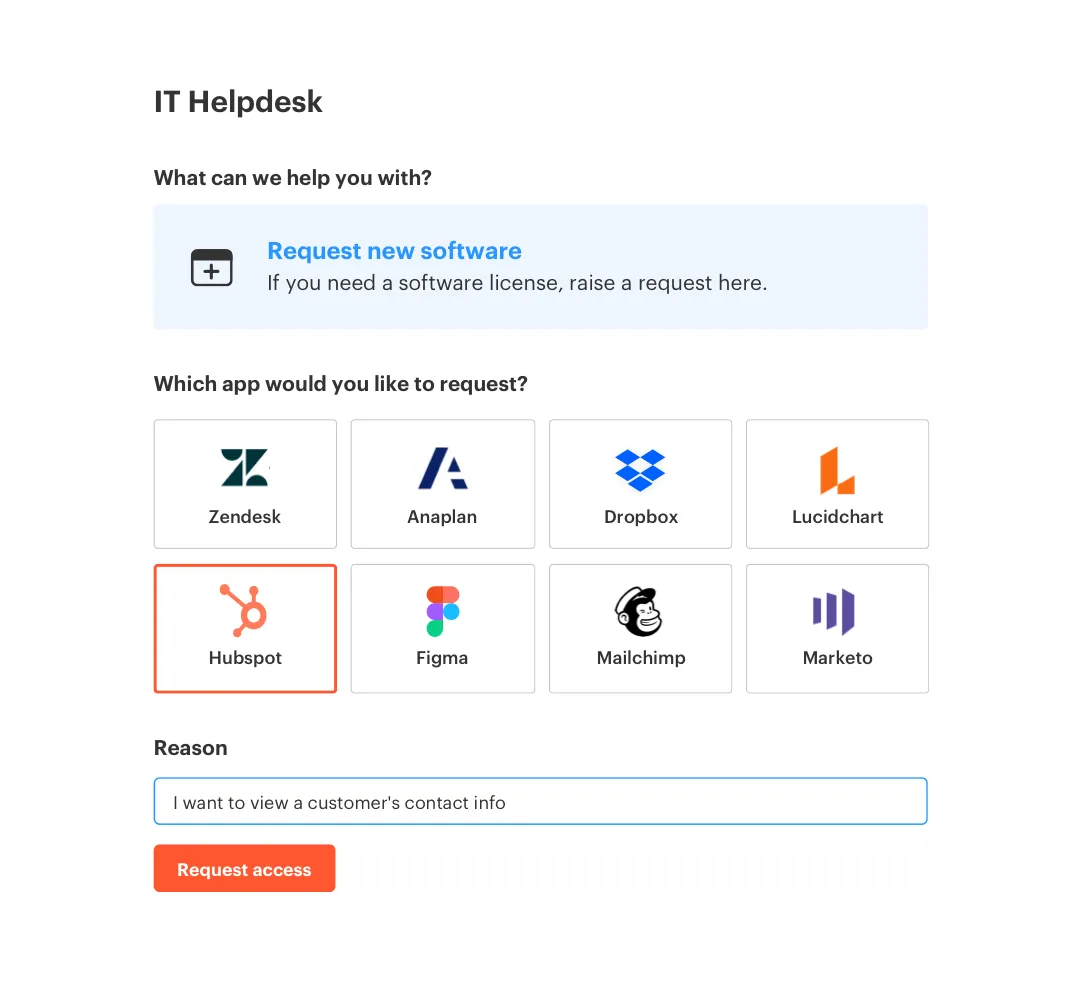

For teams trying to standardize intake first, the Application Catalog gives employees a Jira-native self-service experience in JSM or Slack, with approved apps and mapped roles. That cuts the random Slack pings and email requests before they become audit clutter. If you want to dig into the workflow in your own environment, get started with Multiplier.

The Teams That Stay Audit-Ready Don't Rebuild Evidence

Audit-ready teams don't do heroic cleanup at quarter end. They design the workflow so evidence is produced while the work happens. That's really the importance of automation in access reviews. Not speed for the sake of speed. Control that actually survives contact with growth.

If your reviews still live in spreadsheets, start with one change: make revocation part of the same workflow as the review. Then bring context into the decision. Then keep the record in Jira, where the rest of the work already lives. That's the path.

Separate portals promise control. Embedded workflows usually deliver it.

Frequently Asked Questions

How do I set up access reviews in Multiplier?

To set up access reviews in Multiplier, start by creating a new campaign in the Access Reviews section of Jira. Select the applications you want to include; each must be marked as 'Approved.' Next, assign reviewers for each app: the app owner, a manager, or a specific user. Finally, launch the campaign to notify reviewers. They'll land in a JSM Help Center dashboard showing user details, last login dates, and recommendations, which makes it easier to decide whether to keep or revoke access.

What if I need to revoke access quickly?

If you need to revoke access quickly, build revocation into the review workflow itself. With Multiplier, when a reviewer clicks 'Revoke,' the system can automatically remove access through your identity provider, such as Okta, Entra ID, or Google Workspace. That removes the lag between decision and action and helps maintain least privilege.

Can I automate access requests with Multiplier?

Yes, you can automate access requests using Multiplier's Application Catalog. Employees can browse through a visual catalog of approved applications directly in Jira Service Management or via Slack. They select the app and role they need, and upon submission, a Jira ticket is automatically created. This streamlines the intake process and ensures that requests include the necessary context for approvals, reducing manual work for your IT team.

When should I consider using Time-Based Access?

Consider using Time-Based Access when you want to enforce least privilege for sensitive applications. This feature allows users to request access for a specific duration, such as 1, 6, or 24 hours. Once approved, Multiplier provisions access and automatically revokes it after the time expires, ensuring that access is only available when needed. This is particularly useful for high-risk roles or temporary projects, helping to minimize unnecessary access.

Why does my team struggle with access review completion?

If your team struggles with access review completion, it might be due to a lack of context for reviewers. To improve this, ensure that your access review process in Multiplier provides sufficient data points, like last login dates and group memberships, before reviewers make decisions. This way, they won't feel overwhelmed or unsure about their choices. Simplifying the review interface can also help speed up the process.
